Project 0: The Big Computer

Space datacenters. On Earth. In Lithuania. A blueprint for building modular, truck-deployable GPU clusters across Europe - starting with €3 million and a single site. And why we're building for what comes after the LLM era.

A concept in active development by Tadas, Žygimantas, Ilona, Artūras, Vakarė, Rokas, Airė, Syver, Marius and more · March 2026

Here is the idea. You build a datacenter the way aerospace engineers build satellites: ruggedized, compact, self-contained, able to withstand temperature swings, designed for minimal human maintenance, and small enough to fit on the back of a truck. You make it ready to go to space.

And then - you don't send it to space.

Because space is expensive, and trucks are cheap. You drive it to wherever electricity costs five cents per kilowatt-hour and fiber runs underground. You plug it in. You watch it print money. When the power utility gets greedy, you don't call lawyers. You call a driver.

This is Project 0. A European compute company built from first principles, operating out of Lithuania - with the explicit goal of building the largest independent AI compute cluster on the continent, without selling equity to a VC fund, without paying NVIDIA's 80% margin tax, and without waiting for someone in Brussels or San Francisco to give permission.

The minimum viable version costs €3 million. The path to €1 billion in compute capacity is fully mapped. And it starts with one truck, one site, and one very good AMD GPU.

But this article isn't just about infrastructure economics. It's about why the infrastructure matters - and what it needs to be capable of. Because the AI paradigm is about to shift, and the shift requires compute that nobody in Europe is currently building.

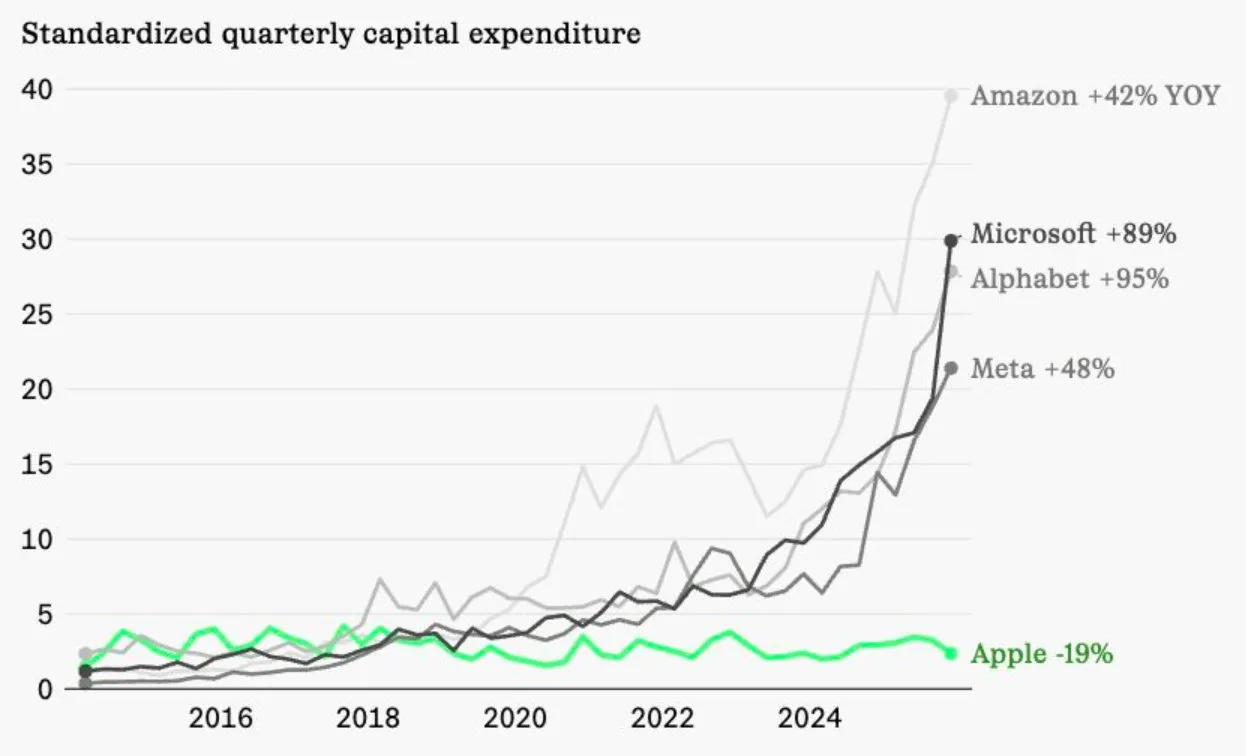

The $2 billion signal: LLMs are advanced autocomplete, and the smart money knows it

In three weeks at the start of 2026, two of the most credentialed AI researchers alive raised a combined $2 billion - not to build better language models, but to bet against the entire LLM paradigm.

Fei-Fei Li closed $1 billion for World Labs on February 18. Yann LeCun closed $1.03 billion for AMI Labs shortly after. Both are building world models. Both are publicly arguing that the entire generative AI gold rush is, at its core, a statistical parlor trick - sophisticated pattern-matching dressed up as intelligence.

The investor overlap is not a coincidence. Nvidia backed both. So did Sea and Temasek. Look at who else is on the AMI cap table: Samsung, Toyota Ventures, Dassault. These are companies that need AI to understand physics, geometry, and force dynamics. A language model that can write poetry is worthless to a robotics company trying to predict what happens when a mechanical arm applies 12 newtons at a 30-degree angle to a flexible surface.

LLMs vs World Models

Two paradigms. One optimized for text. One optimized for physical reality. $2B raised in three weeks is betting on the second one.

LLMs are great at…

Summarization, code, creative writing, chat. Anything that maps to statistical patterns in human language. Deployment-ready today. Commercially dominant.

World Models target…

Physics, force dynamics, spatial geometry, causal reasoning. What happens when a mechanical arm applies 12N at 30° to a flexible surface. LLMs guess. World models compute.

The math on AMI is, on the surface, absurd. $3.5 billion pre-money valuation. Four months old. Zero product. Zero revenue. The CEO said on record that AMI won't ship a product in three months, won't have revenue in six, and won't hit $10M ARR in twelve. He described it as a "long-term scientific endeavor." Investors gave him a billion dollars anyway.

This tells you exactly how the smart money is modeling AI's future. They are not pricing AMI on a revenue multiple. They are pricing it on the probability that LLMs hit a ceiling, and on the bet that the companies building on top of GPT and Claude for physical-world applications have bought the wrong foundation.

LeCun raided his own lab to build this. Mike Rabbat - Meta's former research science director. Saining Xie from Google DeepMind. Pascale Fung, senior director of AI research at Meta. He walked into Zuckerberg's office in November, said he was leaving, and four months later half of FAIR works for him. Meta is reportedly partnering with AMI anyway - which means Zuckerberg thinks LeCun might be right even while Meta keeps scaling Llama.

AMI's first partner is Nabla, a medical AI company building toward FDA-certifiable agentic AI. That's the use case that makes world models existential. LLMs hallucinate. In healthcare, hallucinations kill people. You cannot prompt-engineer your way out of a model that generates statistically plausible text when you need a system that actually understands how a human body works.

Why world models need Project 0

World models don't just need more compute than LLMs - they need a different kind of compute. Training a model that understands physical dynamics, spatial geometry, and causal chains across time requires massive parallel simulation capacity. You cannot rent this from AWS at a cost that lets an independent research lab survive. You need owned, efficient, EU-sovereign hardware that can run 24/7 for months without a $50 million cloud bill showing up halfway through a training run.

LLM training (GPT-4 class): ~€50M cloud cost

World model training (est.): ~€150–300M cloud cost

Same run on owned hardware: ~€8–25M all-in

This is why Project 0 is not just an infrastructure play. It is a bet on a specific architecture transition - one that the two most credentialed researchers in the world just put $2 billion behind. When world models go from research lab to production, the compute they run on needs to be sovereign, efficient, and physically in Europe. We intend to build that infrastructure before anyone else does.

Why "Project 0"?

Zero-indexing is the honest way to count: you start at zero, not one. Most European tech companies start at one - with the first pitch deck, the first VC meeting, the first press release. We start at zero, which means starting with the machine itself.

The insight crystallized while watching American AI infrastructure debates from Vilnius with growing frustration. The honest roadmap in AI - "buy a site and wait for the next-generation card" - is practically impossible in the US right now. Power in San Diego costs €0.35/kWh. Permitting takes years. Utilities reprice the moment they detect AI load. There should be a competent government that builds out infrastructure to help businesses succeed. There isn't one. So instead of fighting that battle, we're fighting a different one: in Lithuania, where the numbers actually work.

The mobility model solves the remaining problem. You don't buy a building and pray the utility plays fair for ten years. You lease a concrete pad. When economics shift, you put the container on a flatbed and go to the next site. No lawyers. No asset write-downs. Just logistics.

Power: why the Baltics win

Before hardware, before software, before business model - power economics determine whether an independent compute company is viable at all.

Industrial Power Cost Comparison

€/kWh for large-load commercial customers. Running a 500 kW cluster in San Diego costs 7× as much as in Lithuania.

Worst (San Diego): €0.35/kWh, €1.53M/yr

Project 0 (Lithuania): €0.05/kWh, €219k/yr

Savings vs San Diego: €1.31M/yr per cluster

Sources: Eurostat energy statistics Q4 2025, EIA commercial rate data, industrial tariff filings. Rates shown are indicative all-in costs for ≥500 kW continuous-load customers.

Running a 500 kW cluster at €0.05/kWh in Lithuania costs approximately €219,000 per year. The same cluster in San Diego: €1.53 million. That €1.3 million annual gap is not a line item. It is the entire difference between a viable business and a money pit.
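The annual figures above follow from a single multiplication. A minimal sketch, assuming the cluster draws a constant 500 kW around the clock and that the quoted rates are all-in; the function and variable names are illustrative, not part of any Project 0 tooling:

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 h; assumes continuous load, no downtime


def annual_power_cost_eur(load_kw: float, rate_eur_per_kwh: float) -> float:
    """Yearly electricity bill for a constant load at a flat all-in rate."""
    return load_kw * HOURS_PER_YEAR * rate_eur_per_kwh


# Rates and load are the ones quoted in the text.
lithuania = annual_power_cost_eur(500, 0.05)
san_diego = annual_power_cost_eur(500, 0.35)

print(f"Lithuania: €{lithuania:,.0f}/yr")
print(f"San Diego: €{san_diego:,.0f}/yr")
print(f"Gap:       €{san_diego - lithuania:,.0f}/yr")
```

Changing the rate to €0.06/kWh shows how sensitive the bill is to a single cent of tariff movement.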

The Baltic grid is structurally stable: Lithuania desynchronized from the Soviet-era BRELL ring in 2025, joined the Continental European Network, and Ignitis is aggressively expanding wind capacity. Green power certificates are available and cheap. Estonia and Latvia offer comparable rates. Finland's hydro and nuclear capacity is a short interconnect away.

Our financial model budgets €0.06/kWh, a cent above the indicative Lithuanian rate, and a business plan that works at that price is competitive for the foreseeable future. And unlike the American situation where a utility can hold a datacenter hostage, our mobile architecture means we can always vote with our wheels.

The hardware: AMD RDNA5, and the 0grad software stack

NVIDIA builds extraordinary chips and charges extraordinary prices. H100s carry roughly 70–80% gross margins. Competing on NVIDIA iron means paying the NVIDIA tax permanently.

AMD's RDNA5 generation - targeting mid-2027 - is expected to launch 96GB VRAM cards at approximately €2,000–2,500 each. Six cards per node gives 576GB of pooled GPU memory: enough to hold open-weights models of up to roughly 250B parameters at FP16 (larger MoE models need quantization or a second node), and a serious candidate for world model inference workloads tomorrow.

The inference and training software is 0grad - our ML framework that owns the AMD kernel layer directly rather than depending on ROCm. 0grad talks to GPU metal. When 0grad is faster, the business makes more money. That feedback loop is unusually clean, and it compounds. By the time RDNA5 ships in mid-2027, we will have twelve months of kernel work specific to that architecture that no one running vLLM on ROCm will have replicated.

Each 0-box is a 6-GPU ruggedized chassis. Forty-eight 0-boxes make one 0-stack - a full cluster in a 40-foot container drawing ~500 kW, deployable in 72 hours on any site with a 630A three-phase connection and a fiber handoff.

0-Stack Specs - per container

GPU nodes (0-boxes): 48

GPUs per node: 6 × RDNA5 96GB

VRAM total: 27.6 TB

Power draw: ~500 kW

Build cost (RDNA5): ~€3M

Deployment time: 72 hours
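The derived figures in the spec sheet can be checked from its three inputs. A small sketch, assuming decimal units (1 TB = 1,000 GB) and treating the ~500 kW draw as a whole-container figure; the `ZeroStack` class is a hypothetical helper for illustration only:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ZeroStack:
    """Per-container spec, using the numbers quoted in the text."""
    nodes: int = 48               # 0-boxes per container
    gpus_per_node: int = 6
    vram_per_gpu_gb: int = 96     # expected RDNA5 card, not yet shipping
    power_kw: float = 500.0       # approximate draw for the whole container

    @property
    def total_gpus(self) -> int:
        return self.nodes * self.gpus_per_node

    @property
    def vram_per_node_gb(self) -> int:
        return self.gpus_per_node * self.vram_per_gpu_gb

    @property
    def total_vram_tb(self) -> float:
        # Decimal terabytes (assumption): 1 TB = 1,000 GB
        return self.total_gpus * self.vram_per_gpu_gb / 1000

    @property
    def power_per_node_kw(self) -> float:
        # Container-level draw divided evenly; includes cooling and networking
        return self.power_kw / self.nodes


stack = ZeroStack()
print(stack.total_gpus, "GPUs")
print(stack.vram_per_node_gb, "GB VRAM per node")
print(round(stack.total_vram_tb, 1), "TB VRAM total")
print(round(stack.power_per_node_kw, 1), "kW per node")
```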

The token math: 500 nodes × 200 tok/s

The revenue model starts with simple arithmetic. Each 0-box node outputs approximately 200 tokens per second on large MoE models. 500 nodes × 200 tok/s × 2,592,000 seconds/month = 259.2 billion tokens per month. At €0.30 per million tokens, that is €77,760/month at full utilization for a 500-node build, roughly ten 0-stacks of 48 nodes each. OpenRouter processes roughly 25.9 trillion tokens monthly; our full build is about 1% of that market.

Token Throughput Calculator

Each 0-box node runs at ~200 tok/s on current open-weights models. The figures below are for a single 0-stack of 48 nodes.

Total GPUs: 288 (48 nodes)

Aggregate throughput: 9.6k tokens/second

Monthly tokens: 24.9B (0.10% of OpenRouter)

Revenue (€0.30/M): ~€7.5k per month

The math:

nodes = 48

tok/s per node = 200

seconds/month = 60 × 60 × 24 × 30 = 2,592,000

tokens/month = 48 × 200 × 2,592,000 = 24.9B

revenue = 24.9B × €0.30/M ≈ €7.5k/mo

OpenRouter share = 0.10% of ~25.9T monthly
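The same arithmetic can be run for any cluster size. A minimal sketch, assuming the 200 tok/s per node, the 30-day month, the €0.30/M price and the ~25.9T OpenRouter volume quoted in this section; the function names and the `utilization` parameter are illustrative additions:

```python
SECONDS_PER_MONTH = 60 * 60 * 24 * 30   # 2,592,000, as in the text
OPENROUTER_MONTHLY_TOKENS = 25.9e12     # ~25.9T, figure quoted in the text


def monthly_tokens(nodes: int, tok_per_s_per_node: float = 200.0,
                   utilization: float = 1.0) -> float:
    """Tokens served per month; utilization=1.0 means fully loaded 24/7."""
    return nodes * tok_per_s_per_node * SECONDS_PER_MONTH * utilization


def monthly_revenue_eur(tokens: float, price_per_million_eur: float = 0.30) -> float:
    """Revenue at a flat price per million tokens."""
    return tokens / 1e6 * price_per_million_eur


for nodes in (48, 500):  # one 0-stack, and the 500-node build
    tokens = monthly_tokens(nodes)
    revenue = monthly_revenue_eur(tokens)
    share = tokens / OPENROUTER_MONTHLY_TOKENS
    print(f"{nodes:>3} nodes: {tokens / 1e9:6.1f}B tokens, "
          f"€{revenue:,.0f}/mo, {share:.2%} of OpenRouter")
```

Passing `utilization=0.8` or a premium price such as `0.50` gives the corresponding scenarios without changing the rest of the calculation.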

European-hosted, GDPR-compliant inference commands a meaningful premium. Enterprise customers in healthcare, finance, and government - legally barred from sending sensitive data to US APIs - pay €0.40–0.60/M for regulatory clarity alone. The base case uses €0.30/M. Upside is real and not dependent on market-share heroics.

Longer term: as world model inference workloads mature, we expect the memory-bandwidth profile of AMD's RDNA5 architecture to be a better fit than NVIDIA's compute-optimized silicon. This isn't a speculative bet - it's what happens when you optimize for the workloads that the $2 billion flowing into world models is meant to fund.

Business model: three revenue streams, 80% gross margin

1. Token API sales. Open inference for European enterprises. Target: €62–77k/month per 0-stack at 80% utilization.

2. Colocation revenue. Dedicated GPU-hour reservations for AI startups wanting guaranteed capacity without operating their own hardware. The cheapest colo in the EU, with GDPR data processing agreements. Target: €30k/month per 0-stack.

3. EU grant leverage. The European Commission has earmarked funding for "sovereign AI infrastructure" under Horizon Europe and the AI Factories initiative. A Lithuanian-registered company deploying EU compute is a strong fit. Grants accelerate the timeline; they don't fund the base model.

Combined: €92–107k/month gross revenue against €22k/month opex - a 76–79% gross margin, approaching 80% at scale. On those figures, before grants, a ~€3M 0-stack pays back in roughly three to three and a half years from first power-on.
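The margin and payback implied by the combined figures can be worked out directly. A rough sketch, assuming the ~€3M build cost from the spec sheet and a simple payback that ignores grants, ramp-up time and financing costs; both function names are illustrative:

```python
def gross_margin(revenue_eur: float, opex_eur: float) -> float:
    """Share of revenue left after operating costs."""
    return (revenue_eur - opex_eur) / revenue_eur


def payback_months(capex_eur: float, revenue_eur: float, opex_eur: float) -> float:
    """Simple payback: months of net cashflow needed to cover the build cost."""
    return capex_eur / (revenue_eur - opex_eur)


CAPEX = 3_000_000   # ~€3M build cost per 0-stack, from the spec sheet
OPEX = 22_000       # €/month, from the text

for revenue in (92_000, 107_000):  # low and high combined monthly revenue
    print(f"€{revenue:,}/mo: margin {gross_margin(revenue, OPEX):.1%}, "
          f"payback {payback_months(CAPEX, revenue, OPEX):.1f} months")
```

Grant tranches and the €3–5 million range quoted for the first 0-stack would shift the payback in either direction, so this is best read as a sensitivity check rather than a forecast.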

Self-replication: one container becomes ten

Self-Replication Revenue Model

Each 0-stack funds the next one. Revenue by stream over 36 months - no external capital after the seed round.

M36 gross revenue: €368k/mo

M36 net profit: €280k/mo

Clusters at M36: 4 × 0-stack

Model assumes 80% utilization, €0.30/M token pricing, €0.06/kWh power, 4-month ramp per cluster. EU grant disbursements modelled as two tranches (M6–9, M20–23). No RDNA5 upgrade capex included.

The first 0-stack requires €3–5 million. Each subsequent cluster is funded by the revenue from the one before it. The business compounds on itself - no additional external capital required after the seed round.

We plan to ship tokens in month one, turn profitable by month six, and deploy cluster two from cashflow by month eighteen. The upside scenario: with 0grad improvements yielding 3× throughput on RDNA5 and three clusters running, revenue reaches €5.4 million per month.

The modular philosophy: lease a plug, not a building

We lease sites, not buildings. What we need from a site operator: a concrete pad, a 630A three-phase industrial connection, and a fiber handoff. We deliver the container, plug in, and begin generating revenue within 72 hours. If economics shift, we load the container on a flatbed and redeploy within a week.

Three initial sites identified in Lithuania: near Elektrėnai, outside Vilnius co-located with an existing telecom facility, and in Kaunas near the free economic zone. All available for under €3,000/month.

For site operators elsewhere in the EU: you provide the concrete, the big plug, and the small plug. We deliver a 0-stack and pay a fair rate. We are actively looking at sites in Poland, Latvia, and Finland.

European AI sovereignty: the tailwind nobody's pricing in

GDPR Article 9, the EU AI Act, and sector-specific data residency requirements mean that a meaningful fraction of European enterprise AI workloads cannot legally run on US infrastructure. Healthcare data, financial models, and government AI systems all face restrictions that effectively require local compute. The supply side of this market is thin.

As world models move from research labs to production - first in medical AI, then in robotics, then in automotive - the EU-sovereign requirement becomes more acute, not less. A world model trained on patient imaging data cannot leave EU jurisdiction. A robotics training run for a German automotive OEM cannot route through Oregon. Project 0 is the only independent compute provider in the EU building toward this capacity.

No VCs. Here's the precise reasoning.

VC funds have a legal obligation to their LPs to maximize returns on a 7–10 year cycle. For a compute infrastructure company, this creates incentives that are structurally misaligned with building something durable. Genuine 100× outcomes don't come from hype. They come from building something so valuable that the world rearranges around it - and that takes time, iteration, and technical depth.

We raise from mission-aligned individuals - engineers, operators, and technical founders who want to see the machine get built. Minimum check: €100,000. Maximum first-round raise: €5 million. Normal shares. Normal rights. Clean cap table. Lithuanian UAB. Audited accounts quarterly. Board seat at €1M+. No crowdfunding. No ICO. No governance tokens.

Money is a map. The machine is the territory. We're building the machine.

The roadmap: one container to 20 exaflops

Project 0 milestones

The point of all this

Current LLMs are, at their core, extraordinarily sophisticated autocomplete. They are useful - genuinely, commercially, broadly useful - and the business case for running them efficiently in Europe is real and immediate. But the $2 billion bet placed in three weeks at the start of 2026 says clearly: the world needs AI that doesn't just predict the next word, but understands why the next thing happens. Physics. Geometry. Causality. The architecture for that doesn't exist yet at production scale. Building it will require an insane amount of compute - owned, efficient, sovereign compute - run for months or years before it produces anything commercially legible.

Project 0 is built for the present and the future simultaneously. In the present: we run LLM inference cheaply in Europe, generate revenue, and compound. In the future: we are the infrastructure that world models in Europe run on - because we'll have the sites, the hardware, the software, and the operational track record when that moment arrives.

One container. One plug. One truck on standby.

Imagine commanding 20 exaflops. Imagine watching a thousand silicon minds come to life in a box - and then a different kind of mind, one that actually understands the physical world, running in the same box five years later.

The machine is the territory. We're building the machine.

Project status

In active consideration - calculations ongoing

Project 0 is currently in the detailed planning and feasibility phase. Site assessments are underway. Hardware cost models are being validated against current AMD supply chain data. Revenue projections are being stress-tested against real European API pricing benchmarks. The team is working through the full financial model before committing to a raise timeline. Nothing is final. Everything is being built carefully.

If you have a site with cheap power and good fiber anywhere in the EU and want to host a 0-stack, or if you're a mission-aligned investor who wants to see the big computer get built - reach out. Minimum check €100k. No VCs. No governance tokens. Just the machine.